Is it possible, by home means, to make a video where you interview yourself with the illusion that both of you are actually in the same space? Yes. Can you do it with modern technology without research (the Google/Bing kind of ”research”) and instructions? Yes. Can you do it alone? Yes. What do you need to do it? Time, free and paid software, a good plan, good organization and low-budget hardware. Will it be any good? Not this time, but next time it will be better.

This article tells how I made my first attempt and what I learned from it.

You can find my first attempt on my YouTube channel.

I see double

It is rather intuitive to think that you first shoot yourself in one character (in this case as Mai Roihu Olivia) facing one way and asking the questions. Then you shoot yourself as the second character, Regina Rex, facing the other way and answering the questions.

I intuitively recognized that, to create the illusion, I needed to react in a timely manner to the answers Regina gives when I am Mai, and to the questions Mai asks when I am Regina. To do that, I needed to hear the voice of my other character while saying my lines as the other one.

To do this I wrote the script, split it for both parties and then read it aloud into my proper microphone (a Snowball) on my desktop, using the voice mannerisms of both characters. I’m calling myself a character as well in this context; I’m just trying to be honest. I am much less aware of my voice and mannerisms when I meet you in person, unless I try to hit on you. I’m also a chameleon: I pick up some of your vocal expressions and style of talking and reflect them back. Regina doesn’t; she just is.

I split the audio into two files as well and exported them as mp3 files. I did all of the audio work in Audacity. I had actually watched some basic Audacity tutorials in the autumn, but I had already used Audacity for several years without ever bothering to learn how to use it, so those tutorials didn’t teach me much I hadn’t already figured out by doing. Note to self: find out how to remove echo, and watch some Audacity tutorials that explain the things in the UI you don’t actually understand and haven’t yet needed for anything. Learning by doing is fun, but not when you are in a hurry. Also, knowing people who know more about audio isn’t enough; you have to pick their brains. It is also scary to realize that to actually pick their brains you need to level up first.

What should have been intuitively clear but wasn’t (and even if it was, it got lost during the cutting) is that the timing will be screwed anyway. As I was not lip-syncing to my original audio tracks, the tempo and delivery time varied. It varied even more when I moved my body. While recording the original script I stood in front of the mic and tried to keep the distance constant while watching the script on the screen. When I sit on the chair I gesture and move, which changes the delivery speed. And when you screw up the timing, you need to add footage of the other character where she does nothing but listen intently to what the other character is blabbing about. I had several silent moments for both characters, where I just waited for the prompter to reach the right place and the soundtrack to reach the point where I should talk, and I could have used those. But my cutting process sent all of that to the trash bin in the first stage, and in the second stage there was no ”loose” video left to copy to fill the empty spaces. Regina doesn’t just sit still; she is present in every moment. This makes Regina vanish into thin air and reappear in the ”final” video. The character Mai also moves and reacts the whole time, so there wasn’t much to use in the second cutting stage to fix timing mistakes.

The second thing that should have been intuitive is that I only have one bar stool, which both characters share. I should have kept footage of the empty stool to use while Regina was entering.

What I should have done was cut the Mai part into scenes, take the audio out of the clips and replay the questions to Regina while recording her. Then I should have again cut the audio out of Regina’s responses and recorded Mai listening to them.
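If you want to prepare those audio cues without fiddling in an editor, a small script can cut one cue file per scene out of a recording. This is a minimal sketch, assuming ffmpeg is installed and that you have written down each scene’s start and end time in seconds; the `Scene` fields, the cue file names and the times in the example are hypothetical.

```python
import subprocess
import sys
from dataclasses import dataclass


@dataclass
class Scene:
    """One question or answer in a recording (hypothetical shoot-plan entry)."""
    name: str
    start: float  # seconds from the start of the recording
    end: float    # seconds from the start of the recording


def cue_commands(source: str, scenes: list[Scene]) -> list[list[str]]:
    """Build one ffmpeg command per scene that saves only that scene's audio."""
    commands = []
    for number, scene in enumerate(scenes, start=1):
        if scene.end <= scene.start:
            raise ValueError(f"scene {scene.name!r} ends before it starts")
        commands.append([
            "ffmpeg", "-y",                          # -y: overwrite old cue files
            "-ss", f"{scene.start:.3f}",             # seek to the scene start
            "-i", source,
            "-t", f"{scene.end - scene.start:.3f}",  # keep only the scene length
            "-vn",                                   # drop the picture, keep the voice
            f"cue_{number:02d}_{scene.name}.wav",
        ])
    return commands


if __name__ == "__main__" and len(sys.argv) > 1:
    # Hypothetical plan: two questions in Mai's take.
    plan = [Scene("intro", 2.0, 9.5), Scene("question_1", 12.0, 18.25)]
    for command in cue_commands(sys.argv[1], plan):
        subprocess.run(command, check=True)
```

The same script works in the other direction: feed it Regina’s take and her answers become the cues Mai listens to.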

Sound problems

I have several Bluetooth earpieces, and I should have used them as monitors and also for voice cues. But as they have all been paired with my phone, I decided against that: I just played the voice cues from the Bluetooth speaker, planning to cut them out later with Audacity. That worked somewhat, but I missed some, and they can be heard in the final video, either as faint additional echoes in the very rare cases where they roughly match the actual delivery, or as faint mumbles in the silent parts of the video.

I tried to speak clearly and project more than I usually do, and the built-in microphone on the phone did its best, which wasn’t enough. Had I used a monitor, I would have caught some cases where I could have changed my pitch, tone and delivery direction to avoid disasters. So, to actually do quality audio you need a monitor; in my case it should have been an in-ear version. And one definitely needs a separate microphone, preferably above the green screen, so that it doesn’t show up in the final production and doesn’t pick up the clacks of my various heels on the floor. I could also use carpets and other fabrics to muffle the echoes, but those are beyond my meager means.

Using a monitor and a separate microphone brings more challenges: you need studio software and hardware to support them. My favorite piece of software for making videos is OBS Studio. Unfortunately it doesn’t have an Android version, and the Ubuntu virtual machine inside my Chromebook doesn’t easily support it. It is such a good piece of software that I will most likely buy a laptop capable of running Linux as my second laptop. I truly like my Chromebook; so far it has catered to all of my needs, and it didn’t cost much. I’ve used Linux as my main operating system since the 1990s, I’ve tried OS X and used my employer’s Windows servers, and I see no reason to switch. OS X is too expensive and Windows... well, no. Windows is a constant source of frustration, and nothing actually works without opening your wallet time after time.

Studio space related problems

The only almost professional thing in my studio is the green screen. But I’m too lazy to iron it and hang it properly, so it doesn’t count. The second screen in the picture, with its blue side outward, is just stored there; I use it when I do desktop videos. The white fan at the front of the picture serves as a stand for the Chromebook, which I use as a prompter. The prompter software I use is Elegant Teleprompter.

The thing that has to stay almost constant while doing studio shots is lighting, and that is exactly why I didn’t redo some shots. I use the natural light that comes from the window, not the lamp that hangs from the ceiling. That is something I will change in the coming weeks. I also have one construction site lamp whose light I bounce off the ceiling or the walls. It takes two to three hours of makeup and preparation to do Regina. Mai’s makeup, clothes and hair take less, but there are only so many hours of light (good light) that one can use. That is why it is vital to shoot everything you need for cutting as fast as you can and to favor quantity over quality.

Camera work

I use my Samsung Flip 4 as my only camera, and as it is on a pedestal, operating it is hard. I have long nails, which makes working the camera while it is mounted even harder.

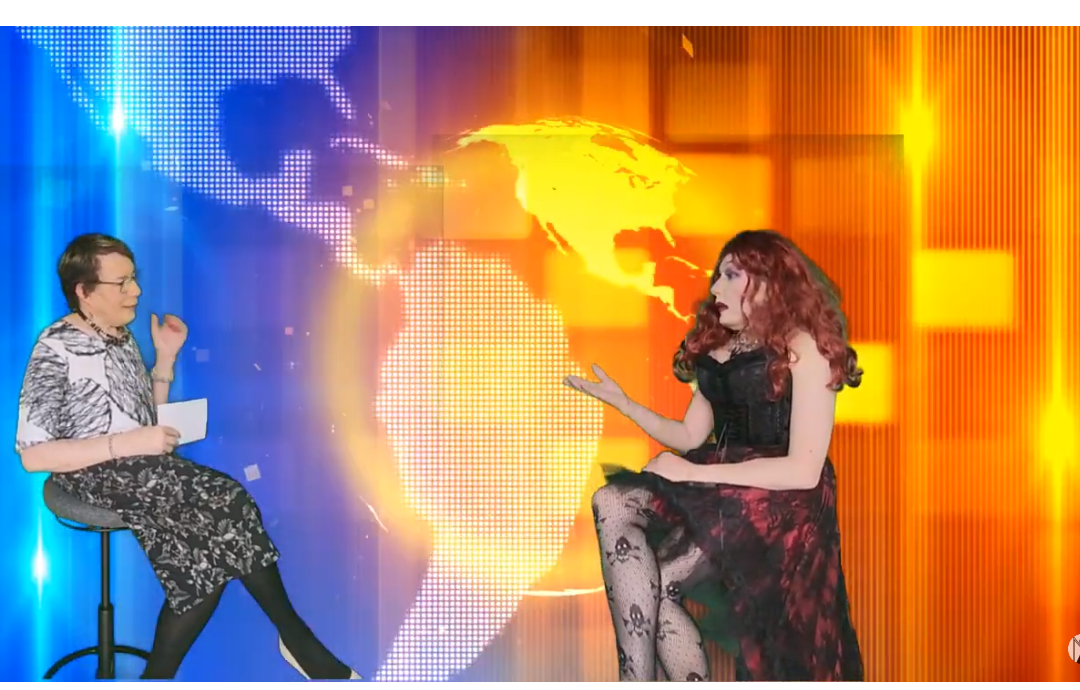

When my old Asus Zenbook (a device I dearly loved, rest in peace) was alive, I used OBS Studio and an Android plugin to send the video stream from the phone to it, and I used its screen as a video monitor to see what I was recording. Now I just point the camera at the green screen, hit record and hope for the best. I don’t know if my limbs fit in the frame, or if I’m even standing in front of the green screen while I deliver my lines. This is actually harder than you think. I’m both an actress who merges with the character and becomes her/him/it, and a memory machine that buffers lines before and after delivery. The person I have become reacts to the outside world and feels things, but when no external cues are available, the buffer takes over and feeds emotions, words and motivations to the character I have become. The character I have become doesn’t see the green screen; she sees the other person in the studio and talks to her even when she actually isn’t there. You can see what this can lead to in the image ”Regina enters halfway”.

To avoid that, you have a studio director who tells you where to be and what you should be doing and saying. Failing that, you should have a camera operator who tells you when you are out of the frame. And when you don’t have anyone, you should have a video monitor to see where you are and a picture script / shoot plan to follow. Again, you can do the monitoring with OBS Studio, and the shoot plan can be a simple mind map on MindMup or a to-do list in some to-do software. I know this very well, and yet I didn’t do it this time either. In my hubris I just kept all of that in my head, which led to the fatal problems in the cutting of the video.

Cutting the video

The main operating system on my computers is Fedora Linux, most of the time the very latest version, installed the very same day it comes freshly baked and steaming out of the oven. This leads to things breaking and misbehaving until weeks of fixing and googling. One notoriously brittle piece of software on the Fedora platform is Openshot. It is easy to use and easy to crash: there are versions that seem to be stable and then bite you in the ass at the most critical moment.

Openshot also eats resources like children devour an unlimited ice cream buffet. Sooner or later it freezes, and you find yourself listening to the machine’s fans complaining of a stomach ache. The user interface also has some interesting translations and unintuitive features that are not the most user-friendly. Mostly because of the instability, and to have something I can use on every piece of hardware I own, I bought a yearly license for WeVideo. This brings another challenge: you have to upload your video files to the cloud, and after the upload a video conversion starts, which can take a long time to complete.

Another challenge is cutting video files precisely in WeVideo, so I do a precut on my desktop with Openshot, which is also a pain in the ass for precision, because the preview freezes and sooner or later drops out of sync. In the precut version I remove everything that is unnecessary for the story. When I shoot video, I take duplicates and variations of each scene until I feel it went fine (again, a director or a camera operator could tell you when to stop retaking). By the time the cutting starts, the light has gone and the makeup has been removed, so there are no redos.

There can be several timestamped files from the camera. The clever person goes through every shot, renames the files, compares them to the picture / shoot script and chooses the best versions for later editing. The person with hubris keeps the script in her head and just trusts herself to remember which files she has already processed and which ones she hasn’t. This is fatal when the person in question suffers from acdc and is as impatient as a child waiting for Santa Claus.
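For the clever-person route, a script can at least build the spreadsheet for you, so no file gets forgotten. This is a minimal sketch, assuming all clips sit in one folder; the column names and the set of video suffixes are my own choices, and the file modification time is only a rough stand-in for when a take was recorded. You fill in the `new_name`, `description`, `script_scene` and `keep` columns by hand, then let `apply_renames` rename the clips.

```python
import csv
import sys
from datetime import datetime
from pathlib import Path

VIDEO_SUFFIXES = {".mp4", ".mov", ".mkv"}
FIELDS = ["file", "modified", "new_name", "description", "script_scene", "keep"]


def catalog_rows(folder: Path) -> list[dict[str, str]]:
    """List every video file, sorted by name (timestamped names sort by time)."""
    rows = []
    for clip in sorted(folder.iterdir()):
        if clip.suffix.lower() not in VIDEO_SUFFIXES:
            continue  # skip notes, the catalog itself, etc.
        modified = datetime.fromtimestamp(clip.stat().st_mtime)
        rows.append({
            "file": clip.name,
            "modified": modified.isoformat(timespec="seconds"),
            "new_name": "",      # e.g. "03_regina_answer_take2.mp4"
            "description": "",
            "script_scene": "",  # scene number in the shoot plan
            "keep": "",          # mark the best take
        })
    return rows


def write_catalog(folder: Path, out: Path) -> None:
    """Write the catalog as a CSV file that any spreadsheet program can open."""
    with out.open("w", newline="", encoding="utf-8") as fh:
        writer = csv.DictWriter(fh, fieldnames=FIELDS)
        writer.writeheader()
        writer.writerows(catalog_rows(folder))


def apply_renames(folder: Path, catalog: Path) -> None:
    """Rename the clips whose new_name column has been filled in."""
    with catalog.open(newline="", encoding="utf-8") as fh:
        for row in csv.DictReader(fh):
            if not row["new_name"]:
                continue
            target = folder / row["new_name"]
            if target.exists():
                raise FileExistsError(target)  # never overwrite another take
            (folder / row["file"]).rename(target)


if __name__ == "__main__" and len(sys.argv) == 3:
    write_catalog(Path(sys.argv[1]), Path(sys.argv[2]))
```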

In my experience Openshot is really bad at doing anything with audio except muting the video and adding an external soundtrack. If you do anything with the audio, it will most likely lose bits and pieces here and there, and in the worst cases the sound goes out of sync with the video. This is why I use ffmpeg to split the video and audio streams into separate tracks. Then I import the audio into Audacity and do what I can. This is done after the first precut. So my whole cutting process should be:

- Choose the video files you will use and rename them to describe their contents (this I skipped again); add them to a spreadsheet where you record the name, a description and the relation to the picture/video script

- Edit the chosen video files in Openshot and leave black space between scenes for easier recutting in WeVideo (this I skipped again)

- Export the video from Openshot as an mp4 file

- Use ffmpeg to separate video and audio

- Import the audio into Audacity, clean it and amplify it

- Export the audio from Audacity as an mp3 file

- Import everything to WeVideo

- Build and combine
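The ffmpeg step is easy to script so that every precut export is split the same way. This is a minimal sketch, assuming ffmpeg is on the PATH; the `_video`/`_audio` file names and the choice of an uncompressed WAV file for the Audacity step are my assumptions, not part of the process above. Copying the video stream (`-c:v copy`) avoids re-encoding, so the picture loses no quality and the split is fast.

```python
import shlex
import subprocess
import sys
from pathlib import Path


def split_commands(video: Path) -> list[list[str]]:
    """Build ffmpeg commands that split one file into silent video and WAV audio."""
    silent = video.with_name(video.stem + "_video" + video.suffix)
    audio = video.with_name(video.stem + "_audio.wav")
    return [
        # -an drops the audio; -c:v copy keeps the video stream as it is
        ["ffmpeg", "-y", "-i", str(video), "-an", "-c:v", "copy", str(silent)],
        # -vn drops the video; the audio is decoded to 16-bit PCM WAV for Audacity
        ["ffmpeg", "-y", "-i", str(video), "-vn", "-acodec", "pcm_s16le", str(audio)],
    ]


def split(video: Path) -> None:
    """Run both commands, stopping on the first ffmpeg error."""
    for command in split_commands(video):
        print(shlex.join(command))
        subprocess.run(command, check=True)


if __name__ == "__main__":
    for name in sys.argv[1:]:
        split(Path(name))
```

Running it on a precut export such as `precut.mp4` would produce `precut_video.mp4` for WeVideo and `precut_audio.wav` for Audacity.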

As I didn’t make a video/picture script and had several files, I missed scenes at the precut stage, and as I also had audio goof-ups, I ended up uploading incomplete files to the cloud server several times and finally gave up. The final video is missing some pieces, and it shows.

The process is solid when it is followed to the letter and the tools are adequate. There are some artifacts in the video stream that are features of WeVideo, so I should consider switching to cloud-based Adobe, as everyone and their dog has done. But I have several traumas from Adobe software from the days when I was editor in chief (and held most of the other roles) for a student club magazine. I used all the Macs in the university’s city center Mac classroom (there were four of them) to make the magazine, as Acrobat froze each Mac for ages while doing its thing. Later I got the Windows version with a student license on my own hardware, which made life a bit easier (remember that I don’t use Windows). And Adobe was also responsible for Flash, which caused problems for end users and was an unlimited source of pain to support.

Final thoughts

Makeup. Regina’s looked horrible in the mirror, but I trusted the contours and colors to pop out nicely on video. Mai’s was my regular get-out-of-the-house variation, which became the real nude version on video. It is not only drag or theater makeup that needs volume, but TV/video makeup as well. Next time, more is more.

I had fun, and as I am not very happy with the quality of the final product but still wish to share it, I decided to present it as an instructional example.

Love,

Mai